A relational database management system

MySQL is an open-source relational database management system that uses Structured Query Language (SQL) to define and query data. It is mainly used to store data for websites and online applications, but it also handles data warehousing and logging workloads. Many database-driven web apps, as well as popular social networking websites, rely on it. The free Community Edition from Oracle is straightforward to set up and suits both established and new websites.

World’s most popular open-source database

Websites that handle huge amounts of data need secure databases to store it. MySQL is one of the most widely used solutions, mainly because of its flexibility, its scalability, and the level of data protection it provides.

MySQL was once best known as a web back end, particularly for small businesses and startups. Since Oracle acquired it in 2010, as part of its purchase of Sun Microsystems, features have been added to make it suitable for a much wider range of users.


Is MySQL easy to learn?

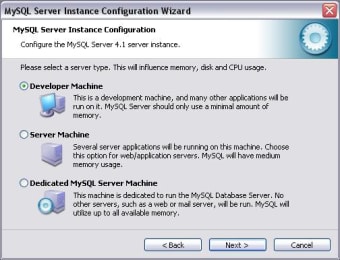

Once installed, MySQL runs on your server and stores data in the databases you create. Learning to use it is simple for anyone who has worked with a similar relational database before, and graphical tools make everyday tasks easier still.

User interface

Several graphical user interface (GUI) tools are available for managing MySQL databases.

The most popular is MySQL Workbench, the official MySQL graphical environment, which lets you administer databases and design their structures visually. Workbench is available in Community and Standard editions.

Adminer is another option: a third-party tool distributed as a single PHP file under the Apache License. It can manage multiple databases and offers various CSS skins to customize its interface.

Alternatively, you can work with MySQL from the command line, where a number of tools make management quick and easy.

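MySQL Utilities, a collection now discontinued by Oracle with most of its functions moved into MySQL Shell, was designed for day-to-day maintenance and administration. Percona Toolkit, a third-party collection from Percona, automates repetitive tasks, helps optimize server performance, and deals with corrupt data. Finally, MySQL Shell is an interactive administration tool that supports JavaScript, SQL, and Python modes.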

Features

MySQL works with cloud infrastructure and distributed computing: you can run it on cloud servers and combine several instances into secure, distributed clusters, for example with MySQL InnoDB Cluster or MySQL NDB Cluster.

It also offers connectors and drivers for most popular programming languages and works with many management tools, which makes it easier to exchange data with other databases and applications.

MySQL runs on all widely used operating systems and platforms, and Oracle provides installers for Windows, macOS, and Linux.

Finally, because it follows standard SQL for most common operations, many queries can be moved between MySQL and other SQL databases with few changes.

Functions

MySQL offers many features for database management. For example, triggers run SQL automatically whenever rows are inserted, updated, or deleted in a table, and cursors inside stored procedures let you process a query's results one row at a time.
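The following is a minimal sketch of an `AFTER INSERT` trigger that writes one audit row for every new order. The table names (`orders`, `audit_log`), the trigger name `orders_after_insert`, and the `run_demo` function are hypothetical. To keep the sketch runnable without a MySQL server, it uses Python's built-in `sqlite3` module with an in-memory database as a stand-in; the `CREATE TABLE` and `CREATE TRIGGER` statements are written to be valid MySQL syntax as well, but treat that as an assumption and check them against your server version.

```python
import sqlite3

# Hypothetical schema. The statements avoid engine-specific features
# (no AUTO_INCREMENT, explicit ids) so they should also run on MySQL.
SCHEMA = [
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, item VARCHAR(50))",
    "CREATE TABLE audit_log (order_id INTEGER, change_type VARCHAR(10))",
    # Fires once for every row inserted into `orders` and records its id.
    """CREATE TRIGGER orders_after_insert
       AFTER INSERT ON orders
       FOR EACH ROW
       BEGIN
           INSERT INTO audit_log (order_id, change_type)
           VALUES (NEW.id, 'insert');
       END""",
]


def run_demo():
    # In-memory SQLite database used as a stand-in for a MySQL server.
    conn = sqlite3.connect(":memory:")
    cur = conn.cursor()
    for statement in SCHEMA:
        cur.execute(statement)
    # sqlite3 uses "?" placeholders; MySQL Connector/Python uses "%s".
    cur.executemany(
        "INSERT INTO orders (id, item) VALUES (?, ?)",
        [(1, "keyboard"), (2, "monitor")],
    )
    conn.commit()
    # The trigger, not the application, has filled audit_log.
    cur.execute("SELECT order_id, change_type FROM audit_log ORDER BY order_id")
    rows = cur.fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    print(run_demo())  # [(1, 'insert'), (2, 'insert')]
```

The application only inserts into `orders`; the audit rows appear because the trigger fires for each inserted row. When typing the same trigger into the interactive `mysql` client, use its `DELIMITER` command so the semicolon inside `BEGIN ... END` does not end the statement early; client libraries that send the statement as a single string do not need this.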

The INFORMATION_SCHEMA database provides read-only views of metadata about the databases on the server, such as table names, column types, and privileges, while the Performance Schema collects runtime statistics for monitoring server performance.

For security, MySQL supports encrypted client-server connections over SSL/TLS, which protects data as it travels across the network.
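As a minimal client-side sketch, the code below opens a verified encrypted connection, assuming the `mysql-connector-python` package, a reachable server, a CA certificate file at a path you supply, and a password in a `MYSQL_PASSWORD` environment variable. The helpers `tls_connect_args` and `connect_with_tls` are hypothetical; `ssl_ca`, `ssl_verify_cert`, and `ssl_verify_identity` are Connector/Python connection options. The option names still say "ssl", although current servers negotiate TLS.

```python
import os


def tls_connect_args(host, user, ca_path, database=None):
    """Hypothetical helper: keyword arguments for mysql.connector.connect()
    that require an encrypted connection with a verified server certificate."""
    args = {
        "host": host,
        "user": user,
        # Read the password from the environment instead of hard-coding it.
        "password": os.environ.get("MYSQL_PASSWORD", ""),
        "ssl_ca": ca_path,            # CA certificate used to verify the server
        "ssl_verify_cert": True,      # fail if the server certificate is not trusted
        "ssl_verify_identity": True,  # also check the host name in the certificate
    }
    if database is not None:
        args["database"] = database
    return args


def connect_with_tls(host, user, ca_path, database=None):
    # Imported lazily so tls_connect_args works without the driver installed.
    # Requires the mysql-connector-python package and a reachable server.
    import mysql.connector

    conn = mysql.connector.connect(**tls_connect_args(host, user, ca_path, database))
    cur = conn.cursor()
    # Ssl_cipher holds the negotiated cipher; it is empty when unencrypted.
    cur.execute("SHOW SESSION STATUS LIKE 'Ssl_cipher'")
    print(cur.fetchone())
    cur.close()
    return conn
```

For example, `connect_with_tls("db.example.com", "app_user", "/path/to/ca.pem")` (hypothetical host, user, and path) prints a row containing the cipher name when the session is encrypted. On the server side, setting the `require_secure_transport` system variable to `ON` makes MySQL reject unencrypted connections altogether.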

Is MySQL free?

You can get MySQL Community Edition free of charge for both personal and commercial use. However, it does not include Oracle's official support, and some advanced tools are reserved for the paid editions.

Alternatively, there are four paid plans to choose from, depending on your needs: Standard, Enterprise, Classic, and Cloud Service, covering both standalone and embedded deployments. Of these, MySQL Enterprise is the most popular.

Cloud deployment

As mentioned above, MySQL can run on cloud computing platforms instead of on your own hardware.

Some of these platforms, such as Amazon RDS for MySQL and Google Cloud SQL, offer MySQL as a managed service, so application owners do not have to install and maintain their databases themselves. The provider handles those tasks, and the app owners pay for the service.

Alternatives

Although MySQL is one of the most popular database systems available, there are other free, open-source alternatives that outdo it in some areas.

MariaDB, a fork of MySQL created by some of its original developers, is a natural replacement for those looking to move away from MySQL. It is used by some of the most popular websites on the internet and shares MySQL's core components, while adding alternative storage engines and server optimizations.

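PostgreSQL is highly customizable and lets you write server-side functions in several programming languages, although its user base is smaller than MySQL's. SQLite, an embedded database that runs inside the application rather than as a separate server, is well suited to IoT devices, mobile phones, MP3 players, and similar hardware.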

A fantastic solution

For years, MySQL has been an industry standard. With clustering, cloud compatibility, and a wide range of integrations, it is now a strong choice for corporate use as well. You can't go wrong with it.